Teaching Neural Nets to Identify Planes and Discover Music Genres

02 Dec 2018

I’ve been working on overhauling Facebook’s recommendation systems at work, but mostly at the product level. Over Thanksgiving I decided to get hands-on and see how far I could get with deep learning myself. There are a ton of great tutorials on using Python and PyTorch these days, and I’m blown away by how accessible the state of the art has become. Both of the models below were built and trained on my desktop PC.

Airplane Classifier

I scraped images from Airliners.net and trained a convolutional neural network to distinguish between different commercial aircraft types like Boeing 737s, Airbus A320s, 747s, and others. It achieved 93% accuracy, which is better than I can do myself.

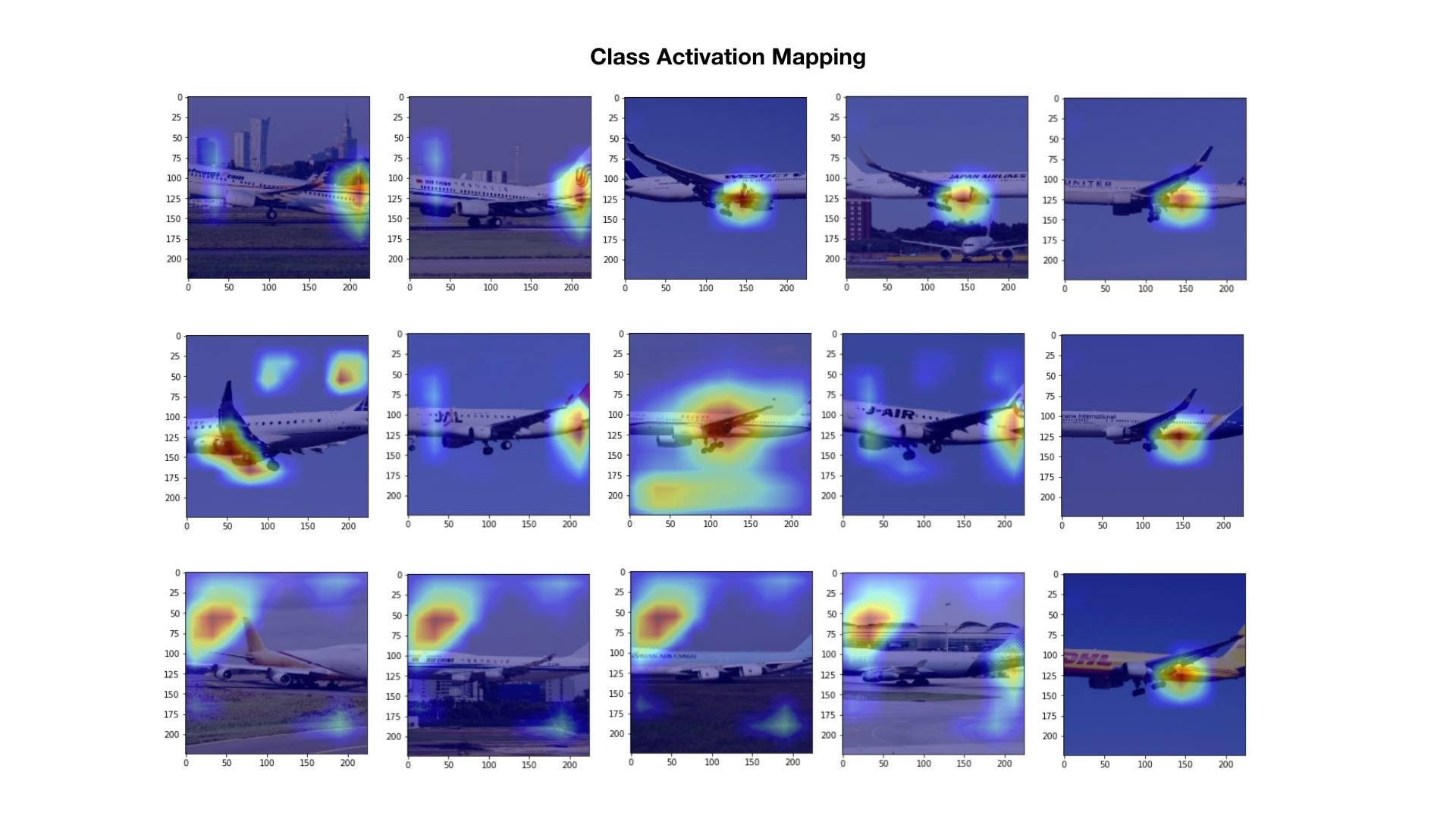

The interesting part was visualizing what the network actually learned using class activation mapping. The heatmaps below show which parts of each image the model focuses on when making a prediction:

It turns out the model learned to look at the tail, the engine and gear, and small details on the fuselage, which are the same features a human spotter would use to tell planes apart.
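For anyone curious how heatmaps like these are computed, here's a minimal sketch of classic class activation mapping in NumPy. It assumes a network whose last convolutional layer feeds a global average pool and then a single linear layer, which may not match the architecture used here. The shapes, the random feature maps, and the `class_activation_map` helper are all made up for illustration.

```python
import numpy as np

def class_activation_map(feature_maps, fc_weights, class_idx):
    """Classic CAM: weight each final-layer feature map by the linear
    layer's weight for the chosen class, then sum over channels.

    feature_maps: (K, H, W) activations of the last conv layer (assumed)
    fc_weights:   (C, K) weights of the linear layer after global avg pooling
    """
    cam = np.tensordot(fc_weights[class_idx], feature_maps, axes=1)  # (H, W)
    cam = cam - cam.min()          # shift so the coolest cell is 0
    peak = cam.max()
    return cam / peak if peak > 0 else cam  # scale to [0, 1] for plotting

# Toy stand-in data: 4 channels on a 7x7 grid, 3 classes.
rng = np.random.default_rng(0)
feature_maps = rng.random((4, 7, 7)) * 0.1
feature_maps[2, 1, 5] = 5.0        # channel 2 fires strongly at row 1, col 5

fc_weights = np.zeros((3, 4))
fc_weights[1, 2] = 1.0             # class 1 relies only on channel 2

heatmap = class_activation_map(feature_maps, fc_weights, class_idx=1)
hot_row, hot_col = np.unravel_index(heatmap.argmax(), heatmap.shape)
print(hot_row, hot_col)
```

In practice the low-resolution heatmap gets upsampled to the input image size and overlaid on the photo, which is how a hot spot ends up sitting on a tail or an engine.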

The last row of images reveals something else: how training data biases creep in. The model “learned” that a 747 is usually on the ground taxiing, because that happens to be the majority of photos of it in the training set. When most of the data shows a plane in one context, the model picks up on the context instead of the plane itself.

Music Embedding Space

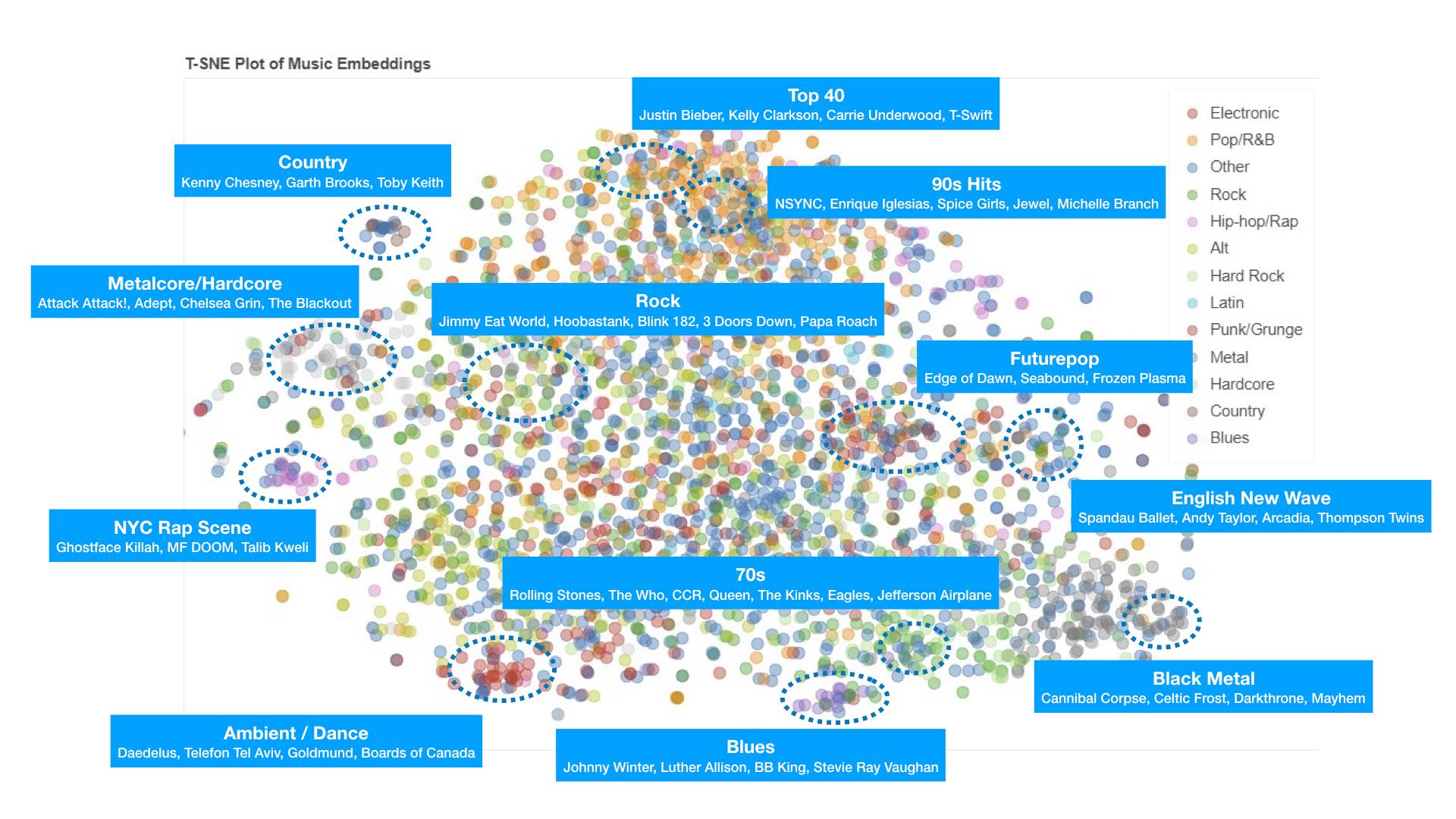

Next, I went to Last.fm and grabbed their public data around which artists different users listened to over a few years. The only input was user-artist pairs. No genre labels or metadata were used. I then trained a 50-dimensional embedding space on this data, and by observing the listening habits of thousands of people, the model discovered musical genres entirely on its own.
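To make the idea concrete, here's a small sketch of learning artist embeddings from nothing but user-artist pairs. It swaps in a truncated SVD of the user-by-artist matrix as a stand-in, which is not necessarily how the 50-dimensional model above was trained. The users, artist names, and helper functions are hypothetical toy data.

```python
import numpy as np

# Hypothetical listening data: (user, artist) pairs, no genre labels.
pairs = [
    ("u1", "country_a"), ("u1", "country_b"),
    ("u2", "country_a"), ("u2", "country_b"),
    ("u3", "ambient_a"), ("u3", "ambient_b"),
    ("u4", "ambient_a"), ("u4", "ambient_b"),
    ("u5", "country_a"), ("u5", "country_b"), ("u5", "ambient_a"),
]

users = sorted({u for u, _ in pairs})
artists = sorted({a for _, a in pairs})
user_idx = {u: i for i, u in enumerate(users)}
artist_idx = {a: i for i, a in enumerate(artists)}

# Binary user-by-artist interaction matrix.
M = np.zeros((len(users), len(artists)))
for u, a in pairs:
    M[user_idx[u], artist_idx[a]] = 1.0

def artist_embeddings(matrix, dim):
    """Keep the top `dim` singular directions as artist coordinates."""
    _, s, vt = np.linalg.svd(matrix, full_matrices=False)
    return vt[:dim].T * s[:dim]    # shape: (n_artists, dim)

emb = artist_embeddings(M, dim=2)

def similarity(a, b):
    """Cosine similarity between two artists in the embedding space."""
    va, vb = emb[artist_idx[a]], emb[artist_idx[b]]
    return float(va @ vb / (np.linalg.norm(va) * np.linalg.norm(vb)))

print(similarity("country_a", "country_b"))  # shared listeners: high
print(similarity("country_a", "ambient_b"))  # no shared listeners: low
```

Artists with overlapping audiences end up pointing in similar directions, even though the model never sees a genre label.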

The visualization above is a 2D t-SNE projection of that 50-dimensional space. Artists that cluster together turned out to share genres, and the clusters mapped cleanly onto categories like country, metalcore, ambient, NYC rap, English new wave, and others. The model was so good at picking up on genre nuances that I ended up learning about sub-genres of music I’d never heard of while debugging!
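A projection like this can be sketched with scikit-learn's `TSNE`. The example below assumes scikit-learn is installed and uses synthetic 50-dimensional vectors in three made-up clusters instead of the real artist embeddings. The perplexity and seed are arbitrary choices, not the settings behind the plot above.

```python
import numpy as np
from sklearn.manifold import TSNE

# Synthetic stand-in for artist embeddings: 3 clusters of 20 points in 50-D.
rng = np.random.default_rng(0)
centers = rng.normal(scale=5.0, size=(3, 50))
embeddings = np.vstack(
    [center + rng.normal(scale=0.5, size=(20, 50)) for center in centers]
)

# Project to 2-D. Perplexity must be smaller than the number of points.
tsne = TSNE(n_components=2, perplexity=10, init="pca", random_state=0)
coords = tsne.fit_transform(embeddings)

print(coords.shape)
```

Each row of `coords` is an (x, y) position that can go straight into a scatter plot. t-SNE mostly preserves local neighborhoods, so distances between far-apart clusters shouldn't be read too literally.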